Remote sensing is the acquisition of information from a distance. NASA observes Earth and other planetary bodies via remote sensors on satellites and aircraft that detect and record reflected or emitted energy. Remote sensors, which provide a global perspective and a wealth of data about Earth systems, enable data-informed decision making based on the current and future state of our planet.

- Orbits

- Observing with the Electromagnetic Spectrum

- Sensors

- Resolution

- Data Processing, Interpretation, and Analysis

- Data Pathfinders

For more information, check out NASA's Interagency Implementation and Advanced Concepts Team (IMPACT) Tech Talk: From Pixels to Products: An Overview of Satellite Remote Sensing.

Orbits

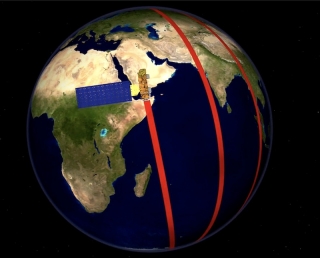

Satellites can be placed in several types of orbits around Earth. The three common classes of orbits are low-Earth orbit (approximately 160 to 2,000 km above Earth), medium-Earth orbit (approximately 2,000 to 35,500 km above Earth), and high-Earth orbit (more than 35,500 km above Earth). Satellites orbiting at 35,786 km are at the altitude at which their orbital period matches the period of Earth's rotation, and are in what is called geosynchronous orbit (GSO). A satellite in GSO directly over the equator is in a geostationary orbit, which enables it to maintain its position directly over the same place on Earth’s surface.
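The link between altitude and orbital period follows from Kepler's third law, T = 2π√(a³/μ), where a is the orbit's semi-major axis (Earth's radius plus altitude, for a circular orbit) and μ is Earth's gravitational parameter. The sketch below assumes a circular orbit, a spherical Earth with an equatorial radius of 6,378.137 km, and μ = 398,600.4418 km³/s²; the function name is illustrative.

```python
import math

# Assumed constants: WGS 84 equatorial radius and Earth's standard gravitational parameter
EARTH_RADIUS_KM = 6378.137
MU_EARTH_KM3_S2 = 398600.4418

def orbital_period_s(altitude_km: float) -> float:
    """Period (seconds) of a circular orbit at the given altitude, from Kepler's third law."""
    a = EARTH_RADIUS_KM + altitude_km  # semi-major axis of a circular orbit
    return 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH_KM3_S2)

for name, alt in [("Aqua (LEO)", 705), ("Galileo (MEO)", 23222), ("GSO", 35786)]:
    print(f"{name:14s} {alt:>6} km -> {orbital_period_s(alt) / 3600:6.2f} h")
```

At 35,786 km the period comes out to about 23.93 hours, one sidereal day (the time Earth takes to rotate once relative to the stars), which is why a geosynchronous satellite keeps pace with Earth's rotation.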

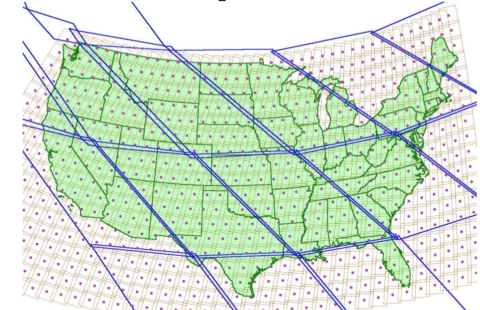

Low-Earth orbit is a commonly used orbit since satellites can follow several orbital tracks around the planet. Polar-orbiting satellites, for example, are inclined nearly 90 degrees to the equatorial plane and travel from pole to pole as Earth rotates. This enables sensors aboard the satellite to acquire data for the entire globe rapidly, including the polar regions. Many polar-orbiting satellites are considered Sun-synchronous, meaning that the satellite passes over the same location at the same solar time each cycle. One example of a Sun-synchronous, polar-orbiting satellite is NASA’s Aqua satellite, which orbits approximately 705 km above Earth’s surface.
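The Sun-synchronous property comes from Earth's equatorial bulge, which slowly rotates (precesses) the plane of an inclined orbit. If the inclination is chosen so the plane precesses about 360° per year, matching Earth's motion around the Sun, each pass keeps the same local solar time. A minimal sketch, assuming a circular orbit and only the dominant oblateness term (J2 ≈ 1.08263 × 10⁻³), solves the standard nodal-precession relation for that inclination; the constant and function names are illustrative.

```python
import math

EARTH_RADIUS_KM = 6378.137
MU_EARTH_KM3_S2 = 398600.4418
J2 = 1.08263e-3  # Earth's dominant oblateness coefficient
# Required precession of the orbit plane: one full turn per tropical year (rad/s)
SUN_SYNC_RATE = 2 * math.pi / (365.2422 * 86400)

def sun_synchronous_inclination_deg(altitude_km: float) -> float:
    """Inclination at which J2 precession of a circular orbit keeps pace with the Sun."""
    a = EARTH_RADIUS_KM + altitude_km
    n = math.sqrt(MU_EARTH_KM3_S2 / a ** 3)  # mean motion (rad/s)
    # Nodal precession rate: dOmega/dt = -1.5 * J2 * (R/a)^2 * n * cos(i)
    cos_i = -SUN_SYNC_RATE / (1.5 * J2 * (EARTH_RADIUS_KM / a) ** 2 * n)
    return math.degrees(math.acos(cos_i))

print(f"{sun_synchronous_inclination_deg(705):.1f} deg")
```

For a 705 km orbit this gives roughly 98°, slightly more than 90°, which is why Sun-synchronous satellites such as Aqua are described as nearly polar.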

Non-polar low-Earth orbit satellites, on the other hand, do not provide global coverage but instead cover only a partial range of latitudes. The joint NASA/Japan Aerospace Exploration Agency Global Precipitation Measurement (GPM) Core Observatory is an example of a non-Sun-synchronous low-Earth orbit satellite. Its orbital track acquires data between 65 degrees north and south latitude from 407 km above the planet.

A medium-Earth orbit satellite at the altitude used by GPS (about 20,200 km) takes approximately 12 hours to complete an orbit. Each day, such a satellite crosses over the same two spots on the equator. This orbit is consistent and highly predictable, so it is used by many telecommunications and navigation satellites, including GPS. One example of a medium-Earth orbit satellite constellation is the European Space Agency's Galileo global navigation satellite system (GNSS), which orbits 23,222 km above Earth.
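Inverting Kepler's third law gives the altitude needed for a chosen period: a = (μ(T/2π)²)^(1/3). Under the same assumptions as a circular orbit around a spherical Earth, a period of half a sidereal day (two orbits for each turn of Earth) lands at roughly 20,200 km. The constants and function name in this sketch are illustrative.

```python
import math

EARTH_RADIUS_KM = 6378.137
MU_EARTH_KM3_S2 = 398600.4418
SIDEREAL_DAY_S = 86164.0905  # one rotation of Earth relative to the stars

def altitude_for_period_km(period_s: float) -> float:
    """Altitude of the circular orbit whose period equals period_s (Kepler's third law)."""
    a = (MU_EARTH_KM3_S2 * (period_s / (2 * math.pi)) ** 2) ** (1 / 3)
    return a - EARTH_RADIUS_KM

print(f"{altitude_for_period_km(SIDEREAL_DAY_S / 2):,.0f} km")
```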

While both geosynchronous and geostationary satellites orbit at 35,786 km above Earth, geosynchronous satellites have orbits that can be tilted above or below the equator. Geostationary satellites, on the other hand, orbit Earth on the same plane as the equator. These satellites capture the same view of Earth with each observation and provide almost continuous coverage of one area. The joint NASA/NOAA Geostationary Operational Environmental Satellite (GOES) series of weather satellites are in geostationary orbits above the equator.
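Simple geometry shows how much of the planet a single geostationary satellite can see. The sketch below assumes a spherical Earth and computes the Earth-central angle from the point directly beneath the satellite to the edge of the visible disk, θ = arccos(R/(R+h)), and the visible fraction of Earth's surface, (1 − cos θ)/2. The function name is illustrative.

```python
import math

EARTH_RADIUS_KM = 6378.137

def visible_cap(altitude_km: float) -> tuple[float, float]:
    """Return (Earth-central half-angle in degrees, fraction of Earth's surface in view)."""
    cos_theta = EARTH_RADIUS_KM / (EARTH_RADIUS_KM + altitude_km)
    theta_deg = math.degrees(math.acos(cos_theta))
    fraction = (1 - cos_theta) / 2  # area of a spherical cap divided by the sphere's area
    return theta_deg, fraction

theta, frac = visible_cap(35786)
print(f"edge of view ~{theta:.1f} deg from the sub-satellite point, {frac:.0%} of Earth")
```

This is a geometric upper limit; views near the edge of the disk are so oblique that the practically useful area is smaller.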

For more information about orbits, please see NASA Earth Observatory's Catalog of Earth Satellite Orbits.

Observing with the Electromagnetic Spectrum

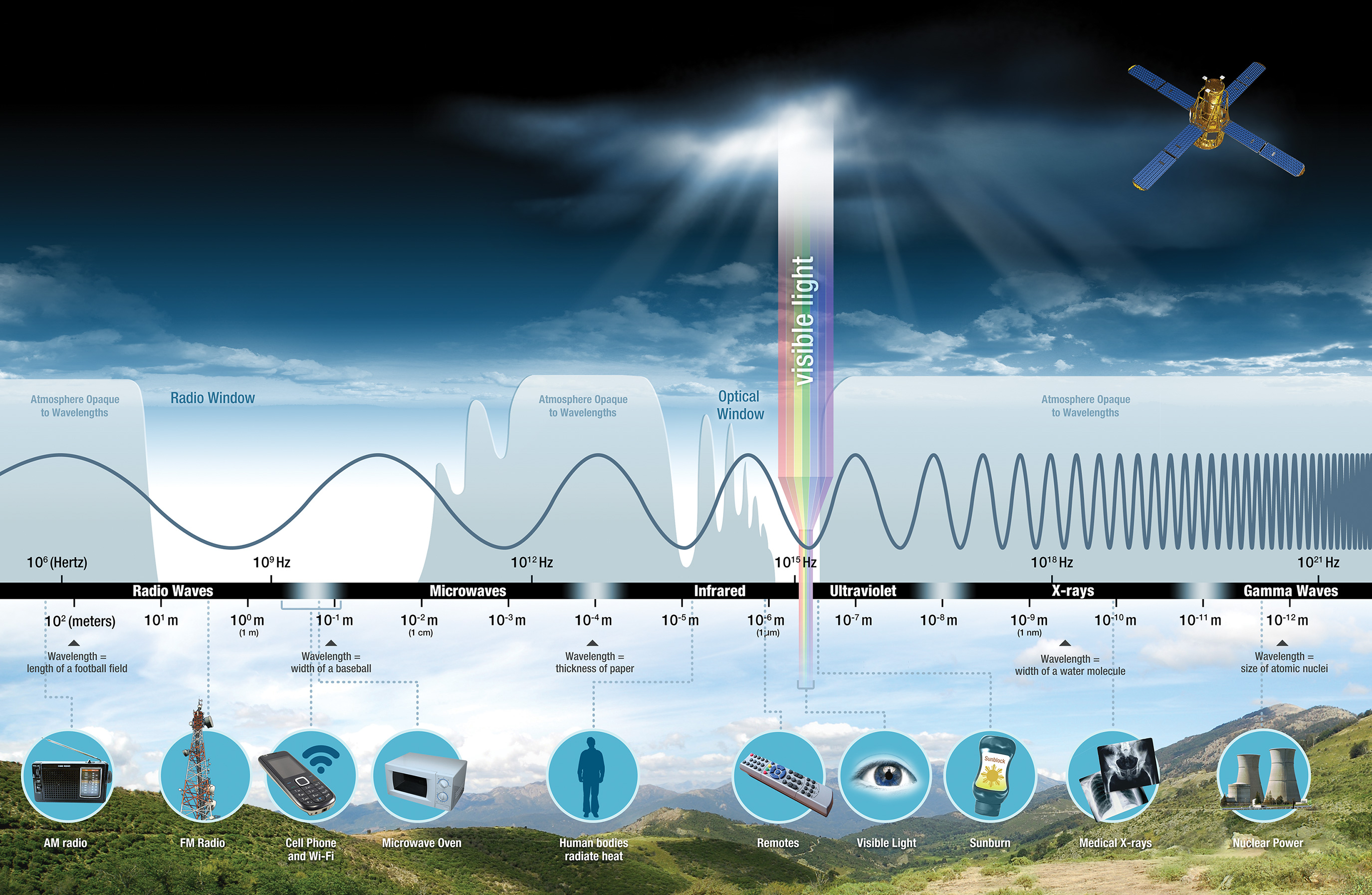

Electromagnetic energy, produced by the vibration of charged particles, travels in the form of waves through the atmosphere and the vacuum of space. These waves have different wavelengths (the distance from wave crest to wave crest) and frequencies; a shorter wavelength means a higher frequency. Some, like radio, microwave, and infrared waves, have a longer wavelength, while others, such as ultraviolet, x-rays, and gamma rays, have a much shorter wavelength. Visible light sits in the middle of that range of long to shortwave radiation. This small portion of energy is all that the human eye is able to detect. Instrumentation is needed to detect all other forms of electromagnetic energy. NASA instrumentation utilizes the full range of the spectrum to explore and understand processes occurring here on Earth and on other planetary bodies.
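The inverse relationship between wavelength and frequency is ν = c/λ, where c ≈ 299,792,458 m/s is the speed of light in a vacuum. A quick sketch converting a few representative wavelengths, chosen here only for illustration:

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in a vacuum

def frequency_hz(wavelength_m: float) -> float:
    """Frequency of an electromagnetic wave from its wavelength: nu = c / lambda."""
    return SPEED_OF_LIGHT_M_S / wavelength_m

examples = {
    "green visible light (0.55 micrometers)": 0.55e-6,
    "thermal infrared (10 micrometers)": 10e-6,
    "microwave (1 centimeter)": 1e-2,
}
for label, wl in examples.items():
    print(f"{label}: {frequency_hz(wl):.3e} Hz")
```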

Some wavelengths are absorbed or reflected by atmospheric components, like water vapor and carbon dioxide, while others pass through the atmosphere largely unimpeded; visible light falls within one of these atmospheric windows. Microwave energy has wavelengths that can pass through clouds, an attribute utilized by many weather and communication satellites.

The primary source of the energy observed by satellites is the Sun. The amount of the Sun’s energy reflected depends on the roughness of the surface and its albedo, which is how well a surface reflects light instead of absorbing it. Snow, for example, has a very high albedo and reflects up to 90% of incoming solar radiation. The ocean, on the other hand, reflects only about 6% of incoming solar radiation and absorbs the rest. Often, when energy is absorbed, it is re-emitted, usually at longer wavelengths. For example, the energy absorbed by the ocean gets re-emitted as infrared radiation.
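Two simple relationships make this paragraph quantitative. Reflected energy is roughly albedo times incoming energy, and Wien's displacement law, λ_max = b/T with b ≈ 2.898 × 10⁻³ m·K, gives the wavelength at which a body at temperature T emits most strongly. In this sketch the incoming flux is a hypothetical round number, the temperatures (about 5,778 K for the Sun's surface and about 290 K for the ocean) are illustrative, and both are treated as ideal blackbodies.

```python
WIEN_B_M_K = 2.897771955e-3  # Wien's displacement constant (m*K)

def reflected(incoming_w_m2: float, albedo: float) -> float:
    """Portion of incoming solar flux reflected by a surface with the given albedo."""
    return albedo * incoming_w_m2

def peak_wavelength_um(temperature_k: float) -> float:
    """Blackbody peak emission wavelength in micrometers, from Wien's law."""
    return WIEN_B_M_K / temperature_k * 1e6

incoming = 1000.0  # hypothetical incoming flux, W/m^2
print(f"snow reflects  {reflected(incoming, 0.90):.0f} W/m^2")
print(f"ocean reflects {reflected(incoming, 0.06):.0f} W/m^2")
print(f"Sun peak   ~{peak_wavelength_um(5778):.2f} um (visible)")
print(f"ocean peak ~{peak_wavelength_um(290):.1f} um (thermal infrared)")
```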

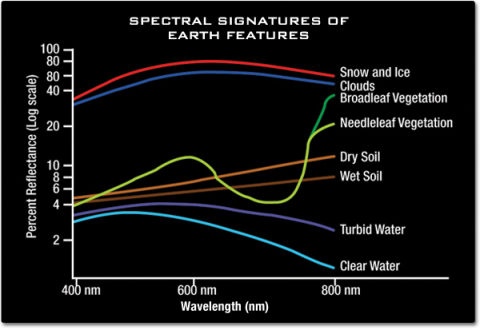

All things on Earth reflect, absorb, or transmit energy, the amount of which varies by wavelength. Just as your fingerprint is unique to you, everything on Earth has a unique spectral fingerprint. Researchers can use this information to identify different Earth features as well as different rock and mineral types. The number of spectral bands detected by a given instrument, its spectral resolution, determines how much differentiation a researcher can identify between materials.
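One common way to compare a measured pixel against reference spectral fingerprints is the spectral angle: each spectrum is treated as a vector of band values, and the angle between vectors measures similarity in spectral shape while downplaying overall brightness. The four-band reflectance values below are made up for illustration; real work would use measured library spectra.

```python
import math

def spectral_angle(a: list[float], b: list[float]) -> float:
    """Angle (radians) between two spectra treated as vectors; smaller means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return math.acos(max(-1.0, min(1.0, dot / norm)))  # clamp against rounding error

# Hypothetical reflectances in blue, green, red, and near-infrared bands
REFERENCE = {
    "vegetation": [0.04, 0.08, 0.05, 0.45],
    "bare soil": [0.10, 0.15, 0.20, 0.28],
    "water": [0.06, 0.05, 0.03, 0.01],
}

def best_match(pixel: list[float]) -> str:
    """Name of the reference spectrum with the smallest spectral angle to the pixel."""
    return min(REFERENCE, key=lambda name: spectral_angle(pixel, REFERENCE[name]))

# A darker pixel with a vegetation-like spectral shape
print(best_match([0.03, 0.07, 0.04, 0.38]))
```

With more (and narrower) bands, the vectors carry more of each material's spectral detail, which is how finer spectral resolution improves this kind of discrimination.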

For more information on the electromagnetic spectrum, with companion videos, view NASA's Tour of the Electromagnetic Spectrum.

Sensors

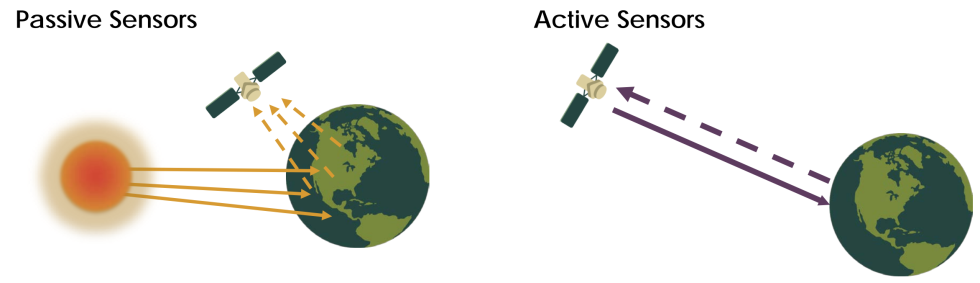

Sensors, or instruments, aboard satellites and aircraft either use the Sun as a source of illumination or provide their own source of illumination. Sensors that rely on natural energy (sunlight reflected from the surface, or energy emitted by the surface and atmosphere themselves) are called passive sensors; those that provide their own source of energy and measure the portion reflected back are called active sensors.

Passive sensors include different types of radiometers (instruments that quantitatively measure the intensity of electromagnetic radiation in select bands) and spectrometers (devices that are designed to detect, measure, and analyze the spectral content of reflected electromagnetic radiation). Most passive systems used by remote sensing applications operate in the visible, infrared, thermal infrared, and microwave portions of the electromagnetic spectrum. These sensors measure land and sea surface temperature, vegetation properties, cloud and aerosol properties, and other physical attributes. Most passive sensors cannot penetrate dense cloud cover and thus have limitations observing areas like the tropics where dense cloud cover is frequent.

Active sensors include different types of radio detection and ranging (radar) sensors, altimeters, and scatterometers. The majority of active sensors operate in the microwave band of the electromagnetic spectrum, which gives them the ability to penetrate the atmosphere under most conditions. These types of sensors are useful for measuring the vertical profiles of aerosols, forest structure, precipitation and winds, sea surface topography, and ice, among others.
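Because an active sensor knows when it sent its pulse, it can measure distance from the echo's round-trip travel time: distance = c × t / 2, halved because the pulse travels out and back. A minimal sketch with a hypothetical round-trip time, ignoring the atmospheric delays that real altimeter processing corrects for:

```python
SPEED_OF_LIGHT_KM_S = 299_792.458

def range_km(round_trip_s: float) -> float:
    """One-way distance to the reflecting surface from a pulse's round-trip time."""
    return SPEED_OF_LIGHT_KM_S * round_trip_s / 2

# Hypothetical: echo received 8.9 milliseconds after transmission
print(f"{range_km(8.9e-3):.1f} km")
```

Subtracting this range from the satellite's precisely known orbital height, pulse after pulse along the ground track, is the basic idea behind measuring sea surface topography with an altimeter.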

The Remote Sensors Earthdata page provides a list of NASA’s Earth science passive and active sensors while the What is Synthetic Aperture Radar? Backgrounder provides specific information on this type of active sensor.

Resolution

Resolution plays a role in how data from a sensor can be used, and it can vary depending on the satellite’s orbit and sensor design. There are four types of resolution to consider for any dataset: radiometric, spatial, spectral, and temporal.

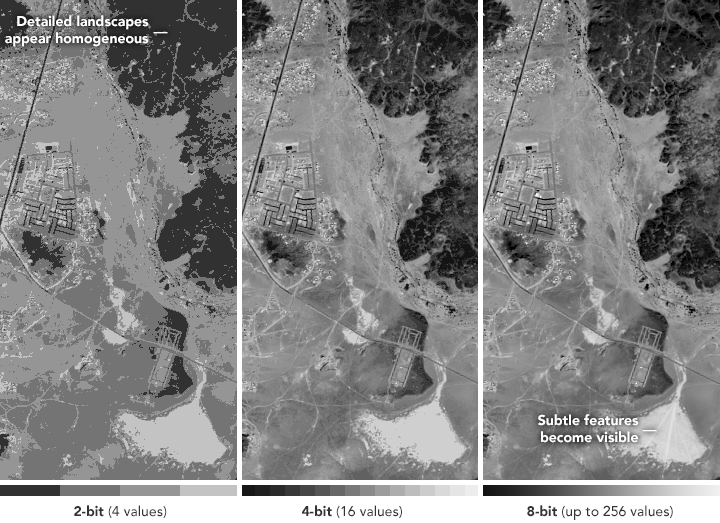

Radiometric resolution is the amount of information in each pixel, that is, the number of bits representing the energy recorded. Each additional bit doubles the number of values the sensor can record, so an n-bit sensor has 2^n possible values. For example, 8-bit resolution gives 2^8 = 256 potential digital values (0-255) to store information. Thus, the higher the radiometric resolution, the more values are available to store information, providing better discrimination between even the slightest differences in energy. For example, when assessing water quality, high radiometric resolution is necessary to distinguish between subtle differences in ocean color.
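The effect of bit depth can be sketched by quantizing a signal, expressed as a fraction of the sensor's full-scale range, into one of 2^n digital numbers. The two input values below are hypothetical; they differ by 0.1% of full scale, which an 8-bit sensor records as the same value but a 12-bit sensor can separate.

```python
import math

def digital_number(fraction_of_full_scale: float, bits: int) -> int:
    """Quantize a signal (0.0 to 1.0 of full scale) into one of 2**bits levels."""
    max_dn = 2 ** bits - 1  # e.g. 255 for 8 bits
    return math.floor(fraction_of_full_scale * max_dn + 0.5)  # round half up

for bits in (8, 12):
    a, b = digital_number(0.400, bits), digital_number(0.401, bits)
    print(f"{bits:2d}-bit: {2 ** bits} levels, values {a} and {b}")
```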

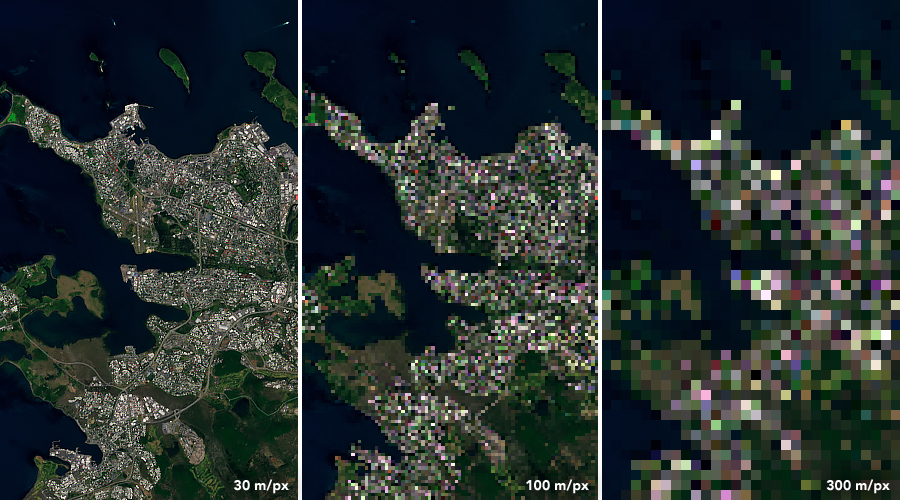

Spatial resolution is defined by the size of each pixel within a digital image and the area on Earth’s surface represented by that pixel.

For example, the majority of the bands observed by the Moderate Resolution Imaging Spectroradiometer (MODIS) have a spatial resolution of 1 km; each pixel represents a 1 km × 1 km area on the ground. MODIS also includes bands with a spatial resolution of 250 m or 500 m. The finer the resolution (the lower the number), the more detail you can see. In the accompanying image, you can see the difference in pixelation between a 30 m/pixel image (left), a 100 m/pixel image (center), and a 300 m/pixel image (right).
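Coarser pixels can be simulated by averaging blocks of finer ones, a simple way to see why small features fade as pixel size grows. A minimal sketch, assuming a small grid of hypothetical values whose dimensions divide evenly by the block size (a factor of 2 would, for instance, turn 30 m pixels into 60 m pixels):

```python
def block_average(grid: list[list[float]], factor: int) -> list[list[float]]:
    """Aggregate factor x factor blocks of pixels into single coarser pixels by averaging."""
    rows, cols = len(grid), len(grid[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be divisible by factor")
    out = []
    for r in range(0, rows, factor):
        out_row = []
        for c in range(0, cols, factor):
            block = [grid[i][j] for i in range(r, r + factor) for j in range(c, c + factor)]
            out_row.append(sum(block) / len(block))
        out.append(out_row)
    return out

# Hypothetical 4 x 4 image: a bright one-pixel feature is diluted in its 2 x 2 block
fine = [
    [0, 0, 0, 0],
    [0, 8, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
print(block_average(fine, 2))  # [[2.0, 0.0], [0.0, 0.0]]
```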

Spectral resolution is the ability of a sensor to resolve finer wavelength intervals, that is, to have more and narrower bands. Many sensors are considered to be multispectral, meaning they have 3-10 bands. Some sensors have hundreds to even thousands of bands and are considered to be hyperspectral. The narrower the range of wavelengths for a given band, the finer the spectral resolution. For example, the Airborne Visible/Infrared Imaging Spectrometer (AVIRIS) captures information in 224 spectral channels. An AVIRIS image cube illustrates the detail within the data. At this level of detail, distinctions can be made between rock and mineral types, vegetation types, and other features. In the cube, the small region of high response in the right corner of the image is in the red portion of the visible spectrum (about 700 nanometers), and is due to the presence of 1-centimeter-long (half-inch) red brine shrimp in the evaporation pond.
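A rough way to compare spectral resolution is the average width per band over the range a sensor covers. AVIRIS spans roughly 400 to 2,500 nanometers with its 224 channels; the sketch below splits that range evenly, which is a simplification, since real bands have individual centers and widths and multispectral bands usually leave gaps. The 8-band comparison is hypothetical, not a specific instrument.

```python
def mean_band_width_nm(start_nm: float, end_nm: float, n_bands: int) -> float:
    """Average width per band if the range were split into equal, contiguous bands."""
    return (end_nm - start_nm) / n_bands

hyperspectral = mean_band_width_nm(400, 2500, 224)  # AVIRIS-like coverage
multispectral = mean_band_width_nm(400, 2500, 8)    # hypothetical 8-band sensor
print(f"hyperspectral: ~{hyperspectral:.1f} nm per band")
print(f"multispectral: ~{multispectral:.0f} nm per band")
```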

Temporal resolution is the time it takes for a satellite to revisit, and observe again, the same area. This resolution depends on the orbit, the sensor’s characteristics, and the swath width. Because geostationary satellites match the rate at which Earth is rotating and continuously view the same area, their temporal resolution is much finer. Polar-orbiting satellites have a temporal resolution that can vary from 1 day to 16 days. For example, the MODIS sensor aboard NASA's Terra and Aqua satellites has a temporal resolution of 1-2 days, allowing the sensor to visualize Earth as it changes day by day. The Operational Land Imager (OLI) aboard the joint NASA/USGS Landsat 8 satellite, on the other hand, has a narrower swath width and a temporal resolution of 16 days, showing changes roughly twice a month rather than daily.
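A back-of-the-envelope sketch shows why swath width drives revisit time for a polar orbiter. Earth rotates under the orbit, so successive daytime passes cross the equator about one equatorial circumference divided by the number of orbits per day apart, and a sensor needs roughly that spacing divided by its swath width, in days, to fill the gaps. The sketch assumes a circular 705 km orbit (Aqua's altitude; Landsat 8 also orbits at about 705 km), swath widths of about 2,330 km for MODIS and 185 km for OLI, and ignores swath overlap, off-nadir pointing, and clouds, so it only approximates the published figures.

```python
import math

EARTH_RADIUS_KM = 6378.137
MU_EARTH_KM3_S2 = 398600.4418
EQUATOR_KM = 40075.017  # Earth's equatorial circumference

def days_to_cover_equator(altitude_km: float, swath_km: float) -> int:
    """Rough number of days for a sensor's daytime swaths to tile the equator."""
    a = EARTH_RADIUS_KM + altitude_km
    period_s = 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH_KM3_S2)  # Kepler's third law
    orbits_per_day = 86400 / period_s
    track_spacing_km = EQUATOR_KM / orbits_per_day  # gap between adjacent daytime passes
    return math.ceil(track_spacing_km / swath_km)

print("MODIS-like swath:", days_to_cover_equator(705, 2330), "days")
print("OLI-like swath:  ", days_to_cover_equator(705, 185), "days")
```

The narrow swath needs about 15 days in this idealized estimate, close to the 16-day repeat cycle, while the wide MODIS swath nearly covers the equator daily and overlaps more toward the poles.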

Why not build a sensor combining high spatial, spectral, and temporal resolution? It is difficult to combine all of the desirable features into one remote sensor. For example, to acquire observations with high spatial resolution (like OLI, aboard Landsat 8) a narrower swath is required, which requires more time between observations of a given area resulting in a lower temporal resolution. Researchers have to make trade-offs. This is why it is very important to understand the type of data needed for a given area of study. When researching weather, which is dynamic over time, a high temporal resolution is critical. When researching seasonal vegetation changes, on the other hand, a high temporal resolution may be sacrificed for a higher spectral or spatial resolution.

Data Processing, Interpretation, and Analysis

Remote sensing data acquired from instruments aboard satellites require processing before the data are usable by most researchers and applied science users. Most raw NASA Earth observation satellite data (Level 0, see data processing levels) are processed at NASA's Science Investigator-led Processing Systems (SIPS) facilities. All data are processed to at least a Level 1, but most have associated Level 2 (derived geophysical variables) and Level 3 (variables mapped on uniform space-time grid scales) products. Many even have Level 4 products. NASA Earth science data are archived at discipline-specific Distributed Active Archive Centers (DAACs) and are available fully, openly, and without restriction to data users.

Most data are stored in Hierarchical Data Format (HDF) or Network Common Data Form (NetCDF) format. Numerous data tools are available to subset, transform, visualize, and export to various other file formats.
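Subsetting a Level 3 product, whose variables sit on a uniform latitude-longitude grid, largely comes down to converting a bounding box into row and column indices. This sketch assumes a hypothetical global grid with north-up rows, cell centers half a cell in from the edges, and longitudes from -180 to 180; real products document their own grid conventions, and existing data tools handle those details (and the HDF or NetCDF reading) for you. The function names are illustrative.

```python
def subset_indices(res_deg: float, lat_min: float, lat_max: float,
                   lon_min: float, lon_max: float) -> tuple[list[int], list[int]]:
    """Rows and columns of a global grid whose cell centers fall inside a bounding box.

    Assumes row 0 is the northernmost band (centers at 90 - (r + 0.5) * res)
    and column 0 starts at -180 degrees (centers at -180 + (c + 0.5) * res).
    """
    n_rows, n_cols = round(180 / res_deg), round(360 / res_deg)
    rows = [r for r in range(n_rows) if lat_min <= 90 - (r + 0.5) * res_deg <= lat_max]
    cols = [c for c in range(n_cols) if lon_min <= -180 + (c + 0.5) * res_deg <= lon_max]
    return rows, cols

def subset(grid: list[list[float]], rows: list[int], cols: list[int]) -> list[list[float]]:
    """Extract the rows x cols window from a 2-D grid."""
    return [[grid[r][c] for c in cols] for r in rows]

rows, cols = subset_indices(1.0, 30, 40, -100, -90)  # 1-degree grid, hypothetical box
print(rows[0], rows[-1], cols[0], cols[-1])
```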

Once data are processed, they can be used in a variety of applications, from agriculture to water resources to health and air quality. A single sensor will not address all research questions within a given application. Users often need to leverage multiple sensors and data products to address their question, bearing in mind the limitations of data provided by different spectral, spatial, and temporal resolutions.

Creating Satellite Imagery

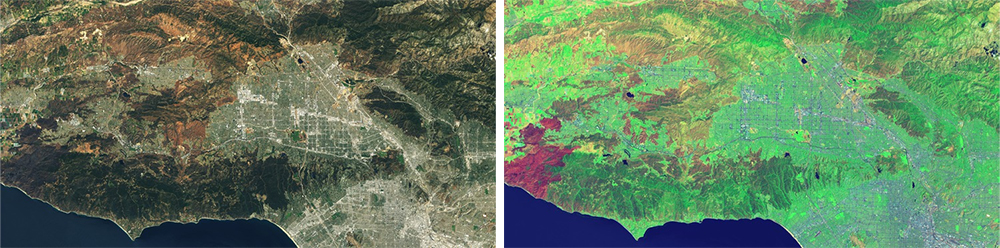

Many sensors acquire data at different spectral wavelengths. For example, Band 1 of the OLI aboard Landsat 8 acquires data at 0.433-0.453 micrometers while the MODIS Band 1 acquires data at 0.620-0.670 micrometers. OLI has a total of 9 bands whereas MODIS has 36 bands, all measuring different regions of the electromagnetic spectrum. Bands can be combined to produce imagery that reveals different features in the landscape. Imagery is often used to distinguish characteristics of a region being studied or to determine an area of study.

True-color images show Earth as it appears to the human eye. For a Landsat 8 OLI true-color (red, green, blue [RGB]) image, the sensor Bands 4 (Red), 3 (Green), and 2 (Blue) are combined. Other spectral band combinations can be used for specific science applications, such as flood monitoring, urbanization delineation, and vegetation mapping. For example, creating a false-color Visible Infrared Imaging Radiometer Suite (VIIRS, aboard the Suomi National Polar-orbiting Partnership [Suomi NPP] satellite) image using bands M11, I2, and I1 is useful for distinguishing burn scars from low vegetation or bare soil as well as for exposing flooded areas. To see more band combinations from Landsat sensors, check out NASA Scientific Visualization Studio's video Landsat Band Remix or the NASA Earth Observatory article Many Hues of London. For other common band combinations, see NASA Earth Observatory's How to Interpret Common False-Color Images, which provides common band combinations along with insight into interpreting imagery.
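Building a composite amounts to assigning three bands to the red, green, and blue display channels and stretching each band's values into the 0-255 display range. The sketch below does this with a simple linear min-max stretch on tiny hypothetical band arrays keyed by Landsat 8 OLI band number; real workflows read the bands from files and often use percentile-based stretches to limit the influence of outliers.

```python
def stretch(values: list[float]) -> list[int]:
    """Linearly rescale values to 0-255 using the band's own minimum and maximum."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero for a constant band
    return [round(255 * (v - lo) / span) for v in values]

def composite(bands: dict[int, list[float]],
              rgb: tuple[int, int, int]) -> list[tuple[int, int, int]]:
    """Combine three bands (by band number) into per-pixel (R, G, B) display values."""
    r, g, b = (stretch(bands[n]) for n in rgb)
    return list(zip(r, g, b))

# Hypothetical reflectances for three pixels, keyed by OLI band number
bands = {
    2: [0.05, 0.10, 0.08],  # blue
    3: [0.07, 0.12, 0.09],  # green
    4: [0.04, 0.15, 0.10],  # red
    5: [0.40, 0.20, 0.30],  # near-infrared
}
true_color = composite(bands, (4, 3, 2))
false_color = composite(bands, (5, 4, 3))  # near-infrared displayed as red
print(true_color)
print(false_color)
```

Swapping the band tuple is all it takes to switch combinations; in the false-color version, pixels bright in the near-infrared (as healthy vegetation typically is) appear red.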

Image Interpretation

Once data are processed into imagery with varying band combinations, these images can aid in resource management decisions and disaster assessment. This requires proper interpretation of the imagery. There are a few strategies for getting started (adapted from NASA Earth Observatory article How to Interpret a Satellite Image: Five Tips and Strategies):

- Know the scale: there are different scales based on the spatial resolution of the image, and each scale reveals different features of importance. For example, when tracking a flood, a detailed, high-resolution view will show which homes and businesses are surrounded by water. The wider landscape view shows which parts of a county or metropolitan area are flooded and perhaps the source of the water. An even broader view would show the entire region: the flooded river system or the mountain ranges and valleys that control the flow. A hemispheric view would show the movement of weather systems connected to the floods.

- Look for patterns, shapes, and textures: many features are easy to identify based on their pattern or shape. For example, agricultural areas are generally geometric in shape, usually circles or rectangles. Straight lines are typically human-made structures, like roads or canals.

- Define colors: when using color to distinguish features, it’s important to know the band combination used in creating the image. True- or natural-color images are created using band combinations that replicate what we would see with our own eyes if looking down from space. Water absorbs light so it typically appears black or blue in true-color images; sunlight reflecting off the water surface might make it appear gray or silver. Sediment can make water appear more brown, while algae can make water appear more green. Vegetation ranges in color depending on the season: in the spring and summer, it’s typically a vivid green; fall may have orange, yellow, and tan; and winter may have more browns. Bare ground is usually some shade of brown, although this depends on the mineral composition of the sediment. Urban areas are typically gray from the extensive use of concrete. Ice and snow are white in true-color imagery, but so are clouds. When using color to identify objects or features, it’s important to also use surrounding features to put things in context.

- Consider what you know: having knowledge of the area you are observing aids in the identification of these features. For example, knowing that an area was recently burned by a wildfire can help determine why vegetation may appear different in a remotely-sensed image.

Quantitative Analysis

Different land cover types can be discriminated more readily by using image classification algorithms. Image classification uses the spectral information of individual image pixels. A program using image classification algorithms can automatically group the pixels in what is called an unsupervised classification. The user can also indicate areas of known land cover type to “train” the program to group like pixels; this is called a supervised classification. Maps or imagery can also be integrated into a geographical information system (GIS) and then each pixel can be compared with other GIS data, such as census data. For more information on integrating NASA Earth science data into a GIS, check out the Earthdata GIS page.
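Both approaches can be sketched in a few lines. Below, an unsupervised k-means clustering groups pixels by spectral similarity without any labels, and a supervised minimum-distance classifier assigns a pixel to the nearest mean of user-labeled training pixels. These are two simple, widely taught algorithms chosen for illustration, not necessarily what any particular NASA product uses, and the two-band (red, near-infrared) pixel values are hypothetical.

```python
import math

Pixel = tuple[float, ...]

def mean(pixels: list[Pixel]) -> Pixel:
    """Band-by-band average of a group of pixels."""
    return tuple(sum(band) / len(pixels) for band in zip(*pixels))

def nearest(pixel: Pixel, centers: list[Pixel]) -> int:
    """Index of the center closest to the pixel in spectral (Euclidean) distance."""
    return min(range(len(centers)), key=lambda i: math.dist(pixel, centers[i]))

def kmeans(pixels: list[Pixel], k: int, iterations: int = 20) -> list[int]:
    """Unsupervised: cluster pixels into k groups; returns a cluster label per pixel."""
    centers = [pixels[i * len(pixels) // k] for i in range(k)]  # simple deterministic start
    for _ in range(iterations):
        labels = [nearest(p, centers) for p in pixels]
        groups = [[p for p, lab in zip(pixels, labels) if lab == i] for i in range(k)]
        centers = [mean(g) if g else centers[i] for i, g in enumerate(groups)]
    return labels

def min_distance_classifier(training: dict[str, list[Pixel]]):
    """Supervised: return a function labeling pixels by the nearest training-class mean."""
    names = list(training)
    means = [mean(training[n]) for n in names]
    return lambda pixel: names[nearest(pixel, means)]

# Hypothetical (red, near-infrared) reflectances
pixels = [(0.05, 0.40), (0.06, 0.42), (0.30, 0.35), (0.32, 0.33), (0.04, 0.45), (0.31, 0.36)]
print(kmeans(pixels, k=2))  # cluster IDs are arbitrary; only the grouping matters

classify = min_distance_classifier({
    "vegetation": [(0.05, 0.40), (0.04, 0.45)],
    "bare soil": [(0.30, 0.35), (0.32, 0.33)],
})
print(classify((0.07, 0.41)))
```

The unsupervised result still needs a person to decide what each cluster represents; the supervised version builds that knowledge in through the training pixels.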

Satellites also often carry a variety of sensors measuring biogeophysical parameters, such as sea surface temperature, nitrogen dioxide or other atmospheric pollutants, winds, aerosols, and biomass. These parameters can be evaluated through statistical and spectral analysis techniques.
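As one example of a simple statistical technique, an ordinary least-squares line fitted to a time series of a retrieved parameter estimates its average rate of change. The annual sea surface temperature values below are invented for illustration; a real trend analysis would also need to account for seasonality, data gaps, and measurement uncertainty.

```python
def linear_trend(times: list[float], values: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept of values against times."""
    n = len(times)
    t_mean, v_mean = sum(times) / n, sum(values) / n
    cov = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    var = sum((t - t_mean) ** 2 for t in times)
    slope = cov / var
    return slope, v_mean - slope * t_mean

# Hypothetical annual-mean sea surface temperatures (deg C) for one region
years = [2015, 2016, 2017, 2018, 2019, 2020]
sst = [18.2, 18.5, 18.3, 18.6, 18.7, 18.8]
slope, _ = linear_trend(years, sst)
print(f"trend: {slope * 10:+.2f} deg C per decade")
```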

Data Pathfinders

To aid in getting started with applications-based research using remotely-sensed data, Data Pathfinders provide a data product selection guide focused on specific science disciplines and application areas, such as those mentioned above. Pathfinders provide direct links to the most commonly-used datasets and data products from NASA’s Earth science data collections along with links to tools that provide ways to visualize or subset the data, with the option to save the data in different file formats.